– Fully open source streaming sensor solution with low-latency wireless video and sensor data transmission.

– Ultra-wideband (UWB) sensor for real-time positioning in 3D.

– IMU and magnetometer for orientation.

– Option to add a Time-of-Flight (ToF) 3D depth camera to your sensor solution.

– Sensor fusion with RGB, UWB, 3D ToF depth, IMU and magnetometer.

– Synchronize multiple cameras over Ethernet for stereo or multi-camera setups.

– Build it yourself or let us do it for you.

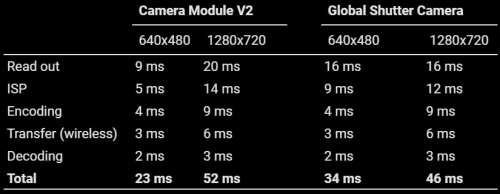

* H.264 baseline profile, variable bitrate ~4 Mbit/s, RPi performance mode (CM4), decoding on RTX 3090, WiFi @ 80 MHz bandwidth.

Low latency is vital for real-time AI applications.

We have optimized for glass-to-python latency. For us, this means the time from when an image is recorded on the sensor until it lands in the GPU memory of the remote AI processing machine, ready for PyTorch, JAX or TensorFlow.

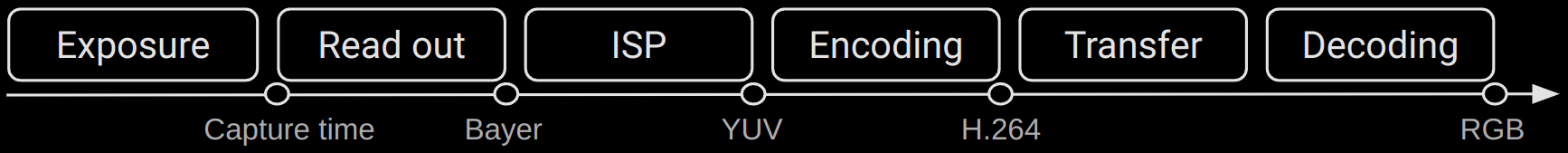

This pipeline consists of camera exposure, memory readout, image signal processing (from raw Bayer to YUV), video encoding, wireless transfer and, finally, video decoding on the remote AI machine.
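To make the stages concrete, here is a minimal sketch of a per-frame latency budget. The class name `GlassToPythonBudget` and all numbers are hypothetical placeholders, not measurements, and the sketch assumes the stages run strictly one after another for a single frame (a real pipeline may overlap some of them).

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class GlassToPythonBudget:
    """Per-stage latency in milliseconds, one field per pipeline stage."""
    exposure_ms: float
    readout_ms: float
    isp_ms: float        # raw Bayer -> YUV
    encode_ms: float     # video encoding on the sensor side
    wireless_ms: float   # wireless transfer
    decode_ms: float     # video decoding on the remote AI machine

    def total_ms(self) -> float:
        # Assumption: stages are sequential, so their latencies add up.
        return sum(getattr(self, f.name) for f in fields(self))

    def largest_stage(self) -> str:
        # Name of the stage that contributes most to the total.
        return max(fields(self), key=lambda f: getattr(self, f.name)).name


# Placeholder numbers for illustration only, NOT measured values.
budget = GlassToPythonBudget(
    exposure_ms=8.0, readout_ms=4.0, isp_ms=3.0,
    encode_ms=5.0, wireless_ms=6.0, decode_ms=2.0,
)
print(f"total: {budget.total_ms():.1f} ms, largest: {budget.largest_stage()}")
```

A breakdown like this shows which stage is worth optimizing first. With a long exposure time, for example, faster encoding alone does little for the end-to-end figure.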

Regular WiFi is subject to packet retransmissions, which can result in variable latency. This is compounded by the ACK (acknowledgement) packets that regular WiFi sends to verify that data has been received.

Our open source software stack supports building your own drone-like mesh WiFi without association and ACK packets. This can guarantee constant latency.
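The trade-off can be illustrated with a small simulation. This is a toy model, not the actual software stack: the function `simulate`, the loss probability, the retry limit and the fixed airtime per attempt are all assumptions, and effects such as backoff and contention are ignored.

```python
import random


def simulate(n_packets, loss_prob, airtime_ms, use_ack, max_retries=7, seed=0):
    """Return per-packet delivery latency in ms, or None for a lost packet."""
    rng = random.Random(seed)
    latencies = []
    for _ in range(n_packets):
        attempts = 1
        # Each transmission attempt is lost independently with loss_prob.
        while rng.random() < loss_prob:
            if not use_ack or attempts > max_retries:
                attempts = None  # nothing (more) is retransmitted: packet dropped
                break
            attempts += 1        # ACK missing, so the sender retransmits
        latencies.append(None if attempts is None else attempts * airtime_ms)
    return latencies


acked = [t for t in simulate(1000, 0.2, 1.0, use_ack=True) if t is not None]
unacked = [t for t in simulate(1000, 0.2, 1.0, use_ack=False) if t is not None]

print("ACK:    min/max latency", min(acked), max(acked), "ms")
print("no ACK: min/max latency", min(unacked), max(unacked), "ms,",
      1000 - len(unacked), "packets dropped")
```

In this model, every packet delivered without ACKs arrives after exactly one airtime, while the ACK-based link shows a spread of latencies. The price is that lost packets are never recovered, so the video stream has to tolerate occasional missing data.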

Assemble your own ToF 3D depth camera. Our open source software includes a custom 16-bit JPEG compression scheme that allows wireless transfer of depth images in real time at 30 FPS.

The sensor has a resolution of 240×180 pixels at 30 FPS and a measurement range of 4 meters.
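As a back-of-the-envelope check, the raw data rate of the depth stream follows directly from these numbers. The sketch below assumes one 16-bit sample per pixel. The millimeter encoding in `depth_to_u16_mm` is a hypothetical illustration of how a 4 meter range fits into 16 bits, not a description of the actual compression format.

```python
WIDTH, HEIGHT, FPS = 240, 180, 30  # from the sensor description
BITS_PER_PIXEL = 16                # assumption: one 16-bit depth sample per pixel


def raw_depth_bitrate_mbit() -> float:
    """Uncompressed depth stream bitrate in Mbit/s."""
    return WIDTH * HEIGHT * BITS_PER_PIXEL * FPS / 1e6


def depth_to_u16_mm(depth_m: float) -> int:
    """Hypothetical encoding: depth in millimeters, clamped to 16 bits."""
    mm = round(depth_m * 1000)
    return max(0, min(mm, 0xFFFF))


def u16_mm_to_depth(value: int) -> float:
    """Inverse of depth_to_u16_mm (up to millimeter rounding)."""
    return value / 1000.0


print(f"raw depth stream: {raw_depth_bitrate_mbit():.3f} Mbit/s")
print("4 m encoded as:", depth_to_u16_mm(4.0))
```

Uncompressed, the depth stream comes to roughly 20.7 Mbit/s, which gives a sense of why compression helps for wireless transfer.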


©2024. MayFly AI. All Rights Reserved.