Packed with sensors:

Want to run advanced AI, such as large vision transformers or even large language models (LLMs), on your robot or drone, but limited by edge compute?

Our low-latency streaming sensor lets you quickly capture and send data for remote AI processing.

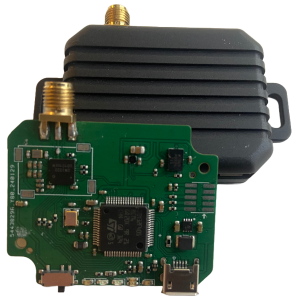

Never lose track of your robot or drone. Get absolute position (x, y, z) and orientation (quaternion) in real time. The pose sensor is based on UWB and an IMU/magnetometer.
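To make the pose output concrete, here is a minimal sketch of turning an orientation quaternion into a compass-style heading. The dictionary layout, the `(w, x, y, z)` component order, and the metre units are assumptions for illustration only; this page does not specify how the pose sensor actually formats its data.

```python
import math

def quaternion_to_yaw(w, x, y, z):
    """Return the rotation about the z axis (yaw) in radians for a unit quaternion."""
    return math.atan2(2.0 * (w * z + x * y), 1.0 - 2.0 * (y * y + z * z))

# Hypothetical pose sample (field names and ordering are assumptions):
# a position in metres and a quaternion describing a 45 degree turn about z.
half_angle = math.radians(45.0) / 2.0
pose = {
    "position": (1.2, 0.5, 0.0),
    "orientation": (math.cos(half_angle), 0.0, 0.0, math.sin(half_angle)),
}

yaw_deg = math.degrees(quaternion_to_yaw(*pose["orientation"]))
print(f"position={pose['position']} heading={yaw_deg:.1f} deg")
```

If your quaternions arrive in `(x, y, z, w)` order instead, reorder the components before calling the helper.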

The wireless pose camera comes with the pose sensor built in for sensor fusion applications!

Our tech is fully open source. Everything you need to build or modify the wireless pose camera or pose sensor can be found on our GitHub page.

Test it out before leveling up on additional sensors. With only a Raspberry Pi and a Raspberry Pi camera, you can run our code and get RGB streamed wirelessly into Python.


    from mayfly.sensorcapture import SensorCapture

    def show_data():
        # Open the capture using the settings in config.json
        cap = SensorCapture('config.json')
        while True:
            # Read the latest sensor data and print it
            sensor_data = cap.read()
            print(sensor_data)

Get all your sensor data streamed wirelessly with low latency into Python. Ready to deploy into PyTorch, JAX and TensorFlow.

– Enhance your existing SLAM solution with low-compute, low-power, instantaneous absolute positioning from our pose sensor.

– Eliminate relocalization issues in your SLAM solution. Always know exactly where your robot is.

– Fuse mono, stereo, or depth sensors with UWB positioning.
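As a rough illustration of why an absolute position fix helps a drifting SLAM or odometry estimate, the sketch below uses a simple complementary filter. This is a generic technique chosen for brevity, not MayFly's algorithm; `PositionFuser`, `uwb_weight`, and the simulated readings are all hypothetical.

```python
class PositionFuser:
    """Blend relative motion from SLAM/odometry with absolute UWB fixes."""

    def __init__(self, start_xyz, uwb_weight=0.2):
        self.estimate = tuple(start_xyz)
        self.uwb_weight = uwb_weight  # 0 = trust odometry only, 1 = trust UWB only

    def update(self, slam_delta_xyz, uwb_xyz):
        # Predict: apply the relative motion reported by SLAM/odometry.
        predicted = tuple(e + d for e, d in zip(self.estimate, slam_delta_xyz))
        # Correct: pull the prediction toward the absolute UWB position.
        self.estimate = tuple(
            p + self.uwb_weight * (u - p) for p, u in zip(predicted, uwb_xyz)
        )
        return self.estimate


# Simulated stationary robot: odometry wrongly reports 0.1 m of motion in x
# on every step, while UWB keeps reporting the true position at the origin.
fuser = PositionFuser(start_xyz=(0.0, 0.0, 0.0))
odometry_only_x = 0.0
for _ in range(50):
    odometry_only_x += 0.1
    fuser.update(slam_delta_xyz=(0.1, 0.0, 0.0), uwb_xyz=(0.0, 0.0, 0.0))

print("odometry only:", round(odometry_only_x, 3))
print("fused:", tuple(round(v, 3) for v in fuser.estimate))
```

In this toy setup the odometry-only position grows with every step, while the fused estimate stays within a bounded offset of the true position. A real system would more likely use a Kalman filter or pose-graph optimization and account for UWB measurement noise.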

Find out when new products become available. We continuously improve and push new software and algorithms. Get notifications directly to your inbox!

©2024. MayFly AI. All Rights Reserved.